June 3, 2026

Kaiko Acquires Amberdata in a landmark digital assets deal!

There is a principle in financial markets that predates fintech, crypto, and most of what we call modern capital markets: the firm that controls the data controls the market. Bloomberg built a $10 billion business on it. Refinitiv, sold to the London Stock Exchange Group for

April 2, 2026

Author: Charitarth Sindhu, Fractional Business & AI Workflow Consultant

A fractional CFO fintech specialist does something a traditional, full-time CFO structurally cannot. They build compounding insight from navigating financial complexity across multiple regulated companies at the same time. That distinction matters more than most founders realise. Because the fintech landscape moves fast, burns cash faster,

By Jesse Fowler, Founder of J&J Renovations and J&J Plumbing Services

Payday Super is about to change every payment cycle for every employer in Australia. If you run a trades business and you have not started preparing for Payday Super, you are already behind. On 1 July 2026, every Australian employer will be required to pay superannuation at the

Fintech jobs in 2026 are drawing record interest from professionals across finance, technology, and compliance backgrounds. The global fintech market is projected to reach $334 billion this year, and that expansion is creating demand for highly specialised talent. Recent industry data shows roughly 26,000 fintech job openings worldwide in early 2025, sitting just 18% below the

Fintech internships give student-athletes a direct route from competitive sport into financial technology careers. Three Marquette University Golden Eagles proved this during their summer placements at Fiserv, a global payments leader headquartered in Milwaukee. Sayla Lotysz, Josie Bieda, and Teddy Wong each turned their athletic discipline and academic training into standout contributions. All three have

SME financing remains one of the most pressing economic challenges for developing nations in 2026. Small and medium enterprises account for over 90 percent of businesses worldwide, yet the gap between what these firms need and what they can access keeps widening. According to the International Finance Corporation, the MSME finance gap now stands at

June 1, 2026

May 29, 2026

Sign up on Finjobsly.com to get AI-matched to your next Fintech role

Join Finjobsly

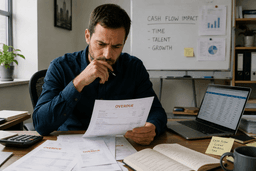

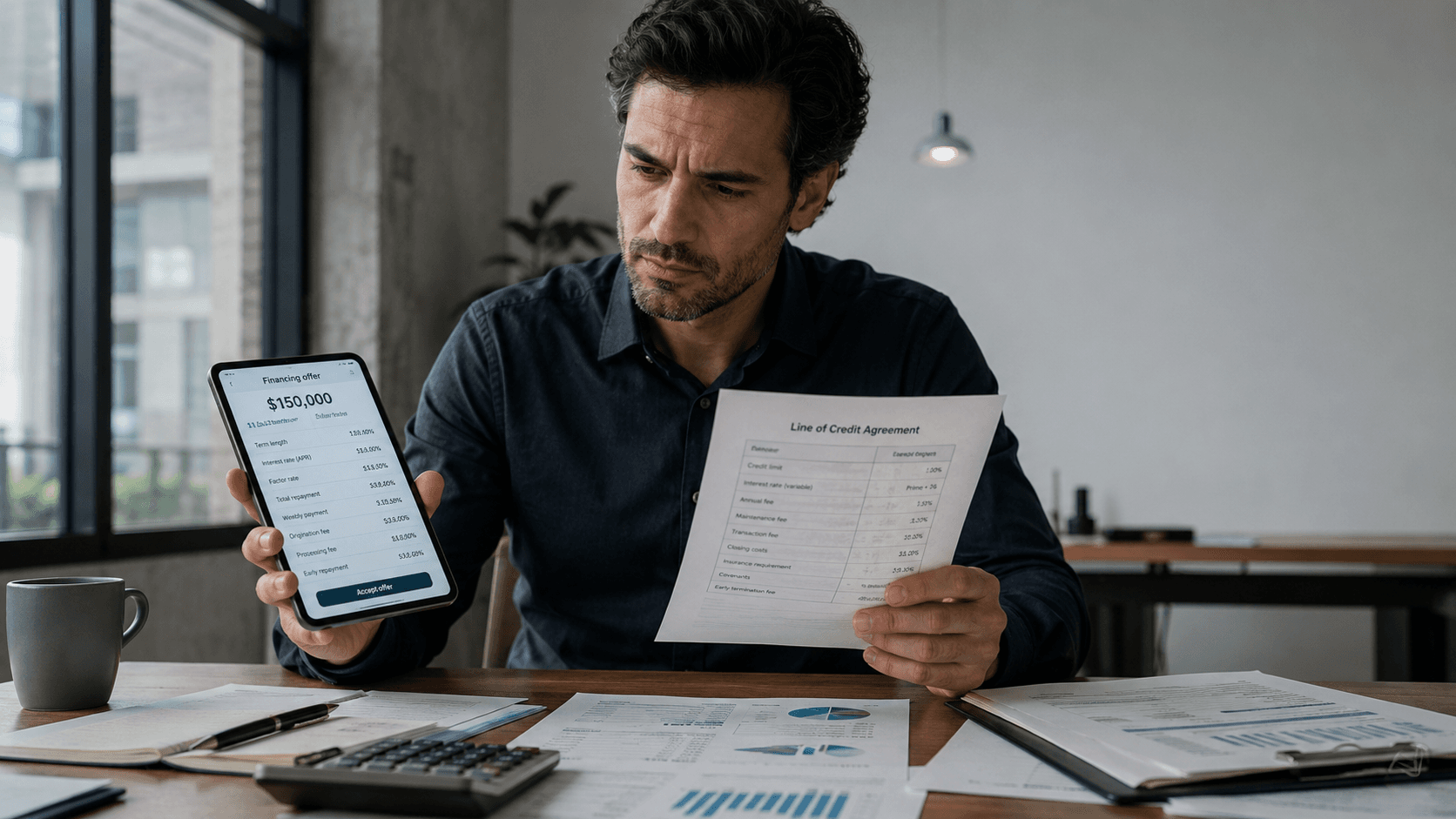

Every business owner has felt the pull of a low headline rate. We asked three industry leaders a blunt question: how can an owner judge whether fintech lending costs genuinely come in below a traditional bank line of credit? Every answer pointed past the advertised APR. Fintech lending costs live in the math behind the

Whoa, talk about a blockbuster move in the biotech world! Gilead Sciences just dropped news that's got everybody buzzing: they're buying out Arcellx for a whopping $7.8 billion. This isn't just any deal; it's all about speeding up a promising new treatment for multiple myeloma, a tough type of blood cancer.

Tilly's Q4 earnings beat sent TLYS stock up more than 60% in after-hours trading on March 11, 2026. The youth-focused retailer posted total net sales of $155.1 million for fiscal 2025 Q4. That figure came in comfortably ahead of the $148.7 million Wall Street consensus. Net sales rose 5.3% year-over-year despite the company operating with

Activist moves by Elliott and Jana dominated mid-February 2026 headlines as both firms unveiled major positions within days of each other. On February 17, Bloomberg reported that Jana Partners had taken a stake in payments processor Fiserv. Then on February 18, Elliott Investment Management disclosed a position of more than 10% in Norwegian Cruise Line Holdings. Together,

Hank Payments stock surged 639% on February 18, 2026, closing at CAD 0.26 on the TSX Venture Exchange. The microcap fintech now operates as The FUTR Corporation (TSXV: FTRC) after its April 2025 rebrand. The session saw 663,000 shares change hands against a 17,086 daily average. Notably, the relative volume of 38.80 highlighted just

Speculation about an Eli Lilly acquisition of Abivax has dominated biotech headlines through Q1 2026. The French biotech's CEO Marc de Garidel dismissed the chatter as "noise" on January 20. By that point, shares had surged more than 2,000% between July and December on positive obefazimod trial data. By contrast, French newsletter La Lettre reported that Eli Lilly

Technology Innovations

Stancer Initiates European Expansion with Strategic Launch in Italy

April 28, 2026


Oracle NetSuite Enhances AI Connector Service at SuiteConnect London

At the recent SuiteConnect London event, Oracle NetSuite unveiled significant updates to its AI Connector Service. These enhancements are tailored to help finance teams leverage artificial intelligence while ensuring robust governance over sensitive financial data and processes. The latest expansion introduces features that enable users


NatWest and Sainsbury’s Launch Strategic Partnership to Enhance Financial Offerings

NatWest and Sainsbury’s have announced a strategic partnership aimed at delivering a new suite of financial products tailored to the grocer’s extensive customer base. The initiative, set to launch in the latter half of 2026, promises customized savings options, loan products, and credit solutions that


Georgia's Fintech Evolution and Strategic Positioning

Georgia's fintech landscape exemplifies strategic positioning, leveraging the country's unique geographic location between Europe and Asia. This positioning has allowed the nation to create a financial ecosystem that is not only agile but also increasingly digital. By 2026, the country aims to further entrench this strategy through initiatives focused on